Explainable Artificial Intelligence (XAI) has gained significant traction as machine learning systems take on more consequential tasks. The more we rely on these systems, the more their transparency and interpretability matter. In this article, we look at why Explainable AI is important, what its key components are, and how it answers some common questions about the field.

The Rise of Explainable Artificial Intelligence (XAI)

Artificial Intelligence has become an integral part of our daily lives, impacting various industries from healthcare to finance. However, the black-box nature of many AI models raises concerns about accountability, trust, and ethical implications. This has led to the emergence of Explainable AI, a paradigm that seeks to demystify the decision-making processes of AI systems.

The Need for Transparency

In critical applications such as healthcare diagnosis or financial risk assessment, understanding the reasoning behind AI-generated decisions is crucial. Many complex AI models lack transparency, leaving end-users and even developers in the dark about which factors influenced an outcome. Explainable AI aims to bridge this gap by providing insights into the decision-making mechanisms of complex models.

Key Components of Explainable AI

Explainable AI comprises various techniques and methodologies designed to unravel the intricacies of AI systems. Understanding these components is essential for both developers and end-users seeking to harness the power of AI responsibly.

Interpretable Models

One approach to achieving explainability is to use inherently interpretable models. In models such as decision trees or linear regression, the learned structure is the explanation: a tree can be read as a sequence of if-then rules, and each regression coefficient states how much a feature moves the prediction. They may not match the capacity of deep neural networks, but their simplicity makes them easy to understand and suitable for certain applications.
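As a small illustration of why such models are considered transparent, the sketch below fits a one-feature linear regression in plain Python. The data (years of experience against salary in thousands) and the function names are invented for this example and do not come from any real dataset or library.

```python
def fit_simple_linear_regression(xs, ys):
    """Ordinary least squares for one feature: y ~ slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Covariance of x and y, and variance of x (both unnormalized).
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical toy data, chosen only to keep the arithmetic easy to follow.
years_experience = [1, 2, 3, 4, 5]
salary_thousands = [35, 40, 45, 50, 55]

slope, intercept = fit_simple_linear_regression(years_experience, salary_thousands)


def predict(x):
    """Prediction of the fitted model for a single input value."""
    return slope * x + intercept


# The fitted parameters can be read directly as an explanation.
print(f"Each extra year changes the prediction by {slope:.1f}k.")
print(f"The prediction at zero years is {intercept:.1f}k.")
```

The whole model is two numbers, so anyone can check how a prediction was produced. A deep neural network offers no comparably direct reading of its parameters.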

Trade-Offs and Considerations

The clarity of interpretable models can come at a cost. On tasks with complex, non-linear patterns, they may give up some predictive accuracy compared with more flexible models. Striking a balance between interpretability and performance remains a challenge in the development of AI systems.

Post-hoc Explainability Techniques

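For existing complex models, post-hoc explainability techniques come into play. These methods provide explanations after the model has made a prediction, without changing the model itself. LIME (Local Interpretable Model-agnostic Explanations), for example, perturbs the input around a single prediction, queries the black-box model on those perturbed samples, and fits a simple surrogate model weighted toward the samples closest to the original input. The surrogate is only locally faithful: it explains that one prediction, not the model as a whole.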
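The idea behind a local surrogate can be sketched in plain Python. This is a simplified illustration of the LIME approach, not the API of the `lime` package: the `black_box` function, the Gaussian perturbation, the kernel width, and all function names are assumptions made for this example.

```python
import math
import random


def black_box(x1, x2):
    """Stand-in for an opaque model: returns a score in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-(3.0 * x1 - 2.0 * x2 ** 2)))


def solve_linear_system(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x


def local_surrogate(model, instance, n_samples=500, scale=0.1,
                    kernel_width=0.2, seed=0):
    """Fit a proximity-weighted linear model around one instance.

    Returns [intercept, coef_1, ..., coef_k]; features are centered on the
    instance, so the intercept approximates the model output at that point.
    """
    rng = random.Random(seed)
    rows, targets, weights = [], [], []
    for _ in range(n_samples):
        # Perturb the instance and query the black box on the new point.
        sample = [v + rng.gauss(0.0, scale) for v in instance]
        offsets = [s - v for s, v in zip(sample, instance)]
        dist_sq = sum(d * d for d in offsets)
        rows.append([1.0] + offsets)
        targets.append(model(*sample))
        # Samples closer to the instance get more weight.
        weights.append(math.exp(-dist_sq / kernel_width ** 2))
    k = len(rows[0])
    # Weighted least squares via the normal equations.
    xtwx = [[sum(w * r[i] * r[j] for r, w in zip(rows, weights))
             for j in range(k)] for i in range(k)]
    xtwy = [sum(w * r[i] * t for r, t, w in zip(rows, targets, weights))
            for i in range(k)]
    return solve_linear_system(xtwx, xtwy)


instance = [0.5, 1.0]
intercept, coef_x1, coef_x2 = local_surrogate(black_box, instance)
print(f"local effect of x1: {coef_x1:+.3f}, local effect of x2: {coef_x2:+.3f}")
```

Near this particular input, the surrogate's coefficients indicate that increasing `x1` raises the score while increasing `x2` lowers it. A different input could yield different coefficients, which is what "locally faithful" means in practice.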

Addressing the Black-Box Dilemma

Post-hoc explainability is particularly valuable in scenarios where the use of inherently interpretable models is not feasible due to the complexity of the underlying data. By shedding light on the decision boundaries of complex models, these techniques contribute to the overall transparency of AI systems.

Ethical Considerations in Explainable AI

AI and machine learning raise ethical questions that explainability can help answer. Addressing them is part of developing and deploying AI systems responsibly.

Bias and Fairness

Explainability plays a pivotal role in identifying and mitigating biases within AI models. When the decision-making process is transparent, developers can see whether a model relies on a sensitive attribute or a close proxy for one, trace the problem back to the training data or the training phase, and correct it, promoting fairness in AI applications.
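One simple check that often accompanies this kind of analysis is to compare how often a model gives a favorable prediction to each group. The sketch below uses invented predictions and group labels; the gap it computes (sometimes called a demographic parity difference) is only one of several fairness measures and is used here as an assumption for illustration, not as a method prescribed by this article.

```python
from collections import defaultdict


def selection_rates(predictions, groups):
    """Share of favorable (1) predictions within each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}


def selection_rate_gap(predictions, groups):
    """Difference between the highest and lowest group selection rates."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


# Hypothetical model outputs (1 = favorable decision) and group labels.
predictions = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

rates = selection_rates(predictions, groups)
gap = selection_rate_gap(predictions, groups)
print(rates, gap)
```

A large gap does not prove that a model is unfair, but it tells developers where to look. Explanation techniques can then show which features are driving the difference.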

Accountability and Trust

In applications where the consequences of AI decisions are profound, establishing accountability is imperative. Explainable AI not only fosters trust by providing understandable insights but also enables accountability in cases where decisions impact individuals’ lives, such as in medical diagnoses or autonomous vehicles.

Comparing Explainable Artificial Intelligence (XAI) and Generative AI: Striking a Balance Between Transparency and Creativity

Explainable Artificial Intelligence (XAI) and Generative AI are two distinct paradigms within artificial intelligence, and comparing them shows how varied the field's applications and objectives are.

Explainable Artificial Intelligence (XAI): Unveiling Decision-Making Processes

Explainable Artificial Intelligence is a paradigm designed to enhance transparency in AI systems. It focuses on unraveling the decision-making processes of complex models, ensuring that the reasoning behind AI-generated outcomes is comprehensible. XAI is particularly vital in critical domains like healthcare and finance, where understanding the rationale behind AI decisions is crucial for responsible and accountable applications. Interpretable models and post-hoc techniques are the two main routes to transparency within XAI, and choosing between them usually means weighing predictive accuracy against clarity.

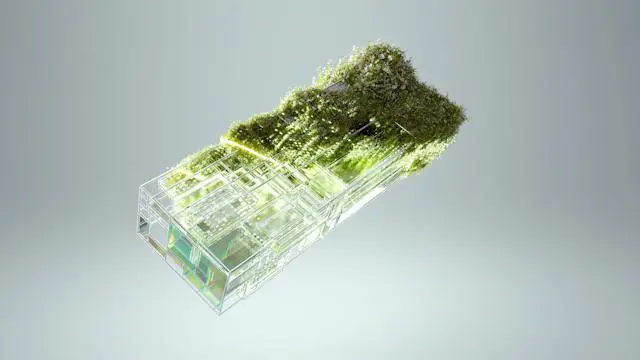

Generative AI: Fostering Creativity and Innovation

On the flip side, Generative AI is all about unleashing creativity within AI systems. Unlike XAI, which emphasizes transparency, Generative AI aims to create novel content, whether it be in the form of images, text, or even music. This paradigm leverages deep neural networks to generate content that is not explicitly programmed, showcasing the potential for AI to become a tool for artistic expression and innovation. However, the challenge lies in maintaining control over the generated content and ensuring ethical use, especially when creativity involves sensitive or controversial topics.

While Explainable Artificial Intelligence focuses on transparency and accountability in decision-making, Generative AI opens the door to AI-driven creativity and innovation. Striking a balance between these two paradigms is essential in harnessing the full potential of artificial intelligence, ensuring it not only produces creative outputs but does so in a transparent and responsible manner.

Frequently Asked Questions (FAQs)

Q1: What is the significance of Explainable AI in real-world applications?

Explainable AI is crucial in real-world applications as it enhances transparency, accountability, and trust. In critical domains like healthcare and finance, understanding the decision-making process of AI systems is essential for responsible use.

Q2: How do interpretable models differ from complex models in Explainable AI?

Interpretable models, such as decision trees, are inherently transparent, making them easier to understand. In contrast, complex models like deep neural networks often achieve higher predictive accuracy on difficult tasks but operate as black boxes, requiring post-hoc techniques for explanation.

Q3: Can Explainable AI address bias in machine learning models?

Yes, Explainable AI can play a significant role in identifying and mitigating bias in machine learning models. Making the decision-making process transparent helps developers detect when a model relies on inappropriate factors, so the bias can be corrected during the development phase.

Q4: Are there trade-offs between interpretability and predictive accuracy in AI models?

Yes, there are often trade-offs between interpretability and predictive accuracy. Interpretable models may lack the capacity to capture intricate patterns, while highly accurate complex models often operate as black boxes. Striking a balance is crucial for effective AI applications.

Conclusion

Explainable AI brings transparency and accountability to artificial intelligence. By making AI decision-making understandable, it addresses ethical concerns and fosters trust in AI applications. From interpretable models to post-hoc explainability techniques, the toolkit of Explainable AI gives developers several ways to create responsible and understandable AI systems.

In the pursuit of advancing technology, it is essential to prioritize ethical considerations, ensuring that AI benefits society without compromising fairness and accountability. As we navigate the evolving field of Explainable AI, it becomes clear that the synergy between technological innovation and ethical responsibility is paramount for a future where AI is a force for good.